
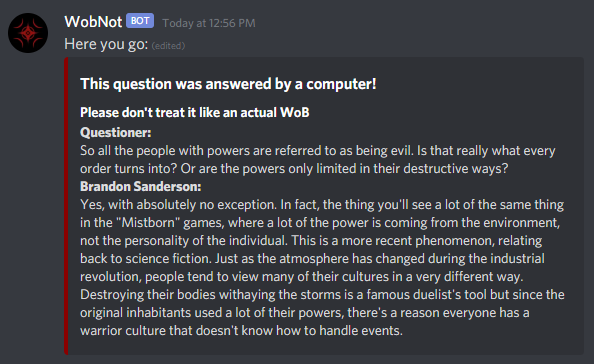

Introduction

If you're on the 17th Shard Discord, you may have seen a few memes like these over the past couple of weeks:

These posts have all been created by a new Discord bot I've been working on called WobNot. You can think of WobNot as a version of WoBBot that's been enlightened by Sja-anat. Its function is essentially the same: you ask a question and it gives you an answer. However, instead of getting responses from Arcanum, WobNot uses a GPT-2 model that's been trained on a large database of actual WoBs to create artificial WoBs (NotWoBs) in real time.

How it works

With a GPT-2 language model, text is broken up into "tokens" drawn from a vocabulary of tens of thousands of entries. Each token represents a string of characters, ranging from individual letters to word fragments and entire common words. The base GPT-2 model, which is publicly available, was trained on a massive amount of text to learn the relationships between these tokens, and uses a neural network to determine which token should come next based on the previous tokens. This base model covers most of the basics of English grammar, and allows text generation that is mostly sensible, though very widely varied.

The neural network can be further trained on specific texts so that its output more closely resembles those texts. In this case, I trained it on a specially formatted database of all the WoBs on Arcanum (as of 11/20/2020). Because every WoB in the database followed the same formatting, the retrained model quickly learned to produce text in that same format.

Favorite NotWoBs so far

WobNot's responses are often clearly related to the Cosmere, but vary widely in how on-topic they are to the actual question.

A handful of responses are surprisingly close to how Brandon would actually answer:

Other responses are still on topic, but far less sensible:

And, as is always the case with AI, some responses are completely off the rails:

One of the unplanned aspects of WobNot is that, because it was trained on WoBs that include conversations, it can generate more text from the questioner:

By the same reasoning, WobNot can also generate questions as well as answers:

Finally, because a handful of WoBs on Arcanum are quotes, readings, or annotations from Brandon, it can also generate quotes without any input.

How to use WobNot

Right now, WobNot can only be used on a Discord server dedicated to testing it, WobNot's Cave. I may release it into the wild at some point, but until it's better polished and I have a better system for hosting the fairly intensive GPT-2 model, I think it's best to keep it in one place, where it's easier to manage.

Have fun asking WobNot whatever random questions you've always wanted to ask Brandon, and post your favorite NotWoBs in this thread!
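For anyone curious about the "How it works" part, here is a minimal Python sketch of the general idea. It is not WobNot's actual code. It swaps the GPT-2 neural network for a toy model that just counts which token follows which, and it splits on whitespace instead of using GPT-2's real tokenizer. The `<Q>`, `<A>`, and `<END>` markers, the function names, and the placeholder entries are all assumptions made up for illustration; the post does not say what format the real database used.

```python
import random
from collections import Counter, defaultdict
from typing import Dict, List

# Hypothetical markers: the real database format is not described in the post.
Q_TAG, A_TAG, END_TAG = "<Q>", "<A>", "<END>"


def format_entry(question: str, answer: str) -> str:
    """Render one question/answer pair in a single fixed layout."""
    return f"{Q_TAG} {question} {A_TAG} {answer} {END_TAG}"


def tokenize(text: str) -> List[str]:
    """Toy tokenizer: split on whitespace (GPT-2 itself uses subword tokens)."""
    return text.split()


def train(entries: List[str]) -> Dict[str, Counter]:
    """Count how often each token follows each other token (a bigram table)."""
    follows: Dict[str, Counter] = defaultdict(Counter)
    for entry in entries:
        tokens = tokenize(entry)
        for prev, nxt in zip(tokens, tokens[1:]):
            follows[prev][nxt] += 1
    return follows


def generate(follows: Dict[str, Counter], prompt: str,
             max_tokens: int = 30, seed: int = 0) -> str:
    """Extend a non-empty prompt one token at a time until END_TAG or the limit."""
    rng = random.Random(seed)
    tokens = tokenize(prompt)
    for _ in range(max_tokens):
        options = follows.get(tokens[-1])
        if not options:
            break  # this token was never followed by anything in training
        choices, weights = zip(*options.items())
        # Sample the next token in proportion to how often it was seen.
        nxt = rng.choices(choices, weights=weights)[0]
        tokens.append(nxt)
        if nxt == END_TAG:
            break
    return " ".join(tokens)


# Placeholder training data, invented for this sketch (not real WoBs).
entries = [
    format_entry("is this a placeholder ?", "yes , it is ."),
    format_entry("is it a real entry ?", "no , it is a placeholder ."),
]
model = train(entries)

# The prompt ends at the answer marker, so the model continues with an answer.
print(generate(model, f"{Q_TAG} is this real ? {A_TAG}"))
```

Because every training entry ends its question with `<A>` and its answer with `<END>`, a prompt that stops at `<A>` nudges the model to continue with answer-like text and then stop, which is the same reason a consistent format helps a fine-tuned GPT-2 model.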
